If you have spent any time trying to automate your business with AI, you have likely fallen into the "mega-prompt" trap. You write a three-page system prompt detailing your company's brand voice, your formatting rules, your specific codebase standards, and your step-by-step operational workflows.

You hit enter, and the system grinds to a halt. The AI starts forgetting instructions, hallucinating details, and producing muddy, generic work.

The era of massive prompt engineering is over, replaced by a much more surgical discipline: Context Engineering. The secret to building a lightning-fast, highly accurate Claude system isn't packing more information into the prompt—it is a design principle called progressive disclosure.

Here is how to stop treating your AI like a storage drive and start architecting a lean, high-signal system.

The Physics of "Context Rot"

To understand why massive prompts fail, you have to understand Claude's finite "attention budget".

Large language models (LLMs) suffer from a phenomenon called "context rot": as the number of tokens in the context window grows, the model's ability to accurately recall specific information declines. Part of the reason is architectural. In a standard transformer, self-attention compares every token in the prompt with every other token, so the work grows with the square of the length. If you double the length of your prompt, you roughly quadruple the attention computation.
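To make the "double the prompt, quadruple the work" arithmetic concrete, here is a minimal sketch. It assumes plain full self-attention, where the number of token-to-token comparisons is simply the token count squared; the function name and the prompt sizes are made up for illustration, and real models add optimizations on top.

```python
def pairwise_comparisons(num_tokens: int) -> int:
    """Token-to-token comparisons under full self-attention (n squared)."""
    return num_tokens * num_tokens


short_prompt = 2_000  # hypothetical prompt size, in tokens
long_prompt = 4_000   # the same prompt, doubled

ratio = pairwise_comparisons(long_prompt) / pairwise_comparisons(short_prompt)
print(ratio)  # 4.0
```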

Think of the context window like packing a backpack for a hike. If you bring your entire house, you might technically have everything you own, but you will never be able to find your compass when you actually need it.

The goal of context engineering is to find the smallest possible set of high-signal tokens that maximize the likelihood of your desired outcome. To do this, we use progressive disclosure: loading information in stages only when the AI actively needs it.

Layer 1: The Core Foundation (CLAUDE.md)

The first layer of progressive disclosure is the CLAUDE.md file. This is the root instruction manual for your project, loaded into the context window at the start of every session.

Because this file is always active, it must be fiercely protected.

Keep it strict: Target a maximum of 200 lines per CLAUDE.md file. Longer files consume more context and actively reduce the model's adherence to your instructions.

Keep it structured: Use markdown headers and bullet points; organized sections are much easier for Claude to follow than dense paragraphs.

Keep it verifiable: Do not use vague, "high-altitude" guidance. Instead of saying "Format code properly," explicitly state "Use 2-space indentation".
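One way to keep the "strict" rule honest is to check it automatically. The sketch below is a hypothetical helper, not part of any Claude tooling: it counts the lines in a CLAUDE.md file and reports whether it fits the 200-line target mentioned above. The file location is an assumption.

```python
from pathlib import Path

MAX_LINES = 200  # the line budget suggested in the text above


def check_line_budget(text: str, max_lines: int = MAX_LINES) -> tuple[int, bool]:
    """Return (line_count, within_budget) for the contents of a CLAUDE.md file."""
    line_count = len(text.splitlines())
    return line_count, line_count <= max_lines


if __name__ == "__main__":
    path = Path("CLAUDE.md")  # assumed location: the project root
    if path.exists():
        count, ok = check_line_budget(path.read_text(encoding="utf-8"))
        print(f"{path}: {count} lines, {'within' if ok else 'over'} budget")
```

A check like this could run in a pre-commit hook or CI step, so the file cannot quietly grow past its budget.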

Layer 2: The "VIP Bouncers" (Path-Specific Rules)

If you can't put all your company's operational rules into the main CLAUDE.md, where do they go? They go into the .claude/rules/ directory.

Instead of a single monolithic file, you break your instructions into modular, topic-specific files (e.g., api-design.md or brand-voice.md). By using YAML frontmatter at the top of these files, you can scope rules to specific file paths.

For example, you can configure a rule so it only triggers when Claude works with a file whose path matches the glob pattern **/*.ts, meaning any TypeScript file in the project. These conditional rules act like VIP bouncers for your context window: the instructions do not get into the "club" (the active memory) unless a matching file is in play. This drastically reduces noise and saves valuable context space.
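The bouncer logic can be sketched in a few lines. This is an illustration of the idea, not how Claude Code implements it. The rule file contents are hypothetical, the `paths` frontmatter key is an assumption to verify against the Claude Code documentation, and the parser is a toy that only handles this one shape of frontmatter.

```python
from fnmatch import fnmatchcase

# Hypothetical contents of a file such as .claude/rules/typescript.md.
# The `paths` key is an assumption; check the official docs for the exact name.
RULE_FILE = """\
---
paths:
  - "**/*.ts"
---
# TypeScript rules
- Prefer explicit return types on exported functions.
"""


def parse_path_globs(rule_text: str) -> list[str]:
    """Pull glob patterns out of the frontmatter (toy parser, not real YAML)."""
    lines = rule_text.splitlines()
    if not lines or lines[0].strip() != "---":
        return []  # no frontmatter: nothing to match in this sketch
    globs = []
    for line in lines[1:]:
        stripped = line.strip()
        if stripped == "---":  # end of frontmatter
            break
        if stripped.startswith("- "):
            globs.append(stripped[2:].strip().strip('"\''))
    return globs


def rule_applies(rule_text: str, open_file: str) -> bool:
    """A scoped rule gets past the bouncer only if the file matches a glob."""
    # fnmatchcase is a simplification: its `*` also matches `/`.
    return any(fnmatchcase(open_file, g) for g in parse_path_globs(rule_text))


print(rule_applies(RULE_FILE, "src/app/main.ts"))      # True
print(rule_applies(RULE_FILE, "docs/brand-voice.md"))  # False
```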

Layer 3: Agent Skills (On-Demand Expertise)

The ultimate expression of progressive disclosure is Agent Skills. Skills are modular capabilities—packaged workflows, instructions, and scripts—that transform Claude from a general-purpose assistant into a specialized expert.

When an LLM generates output without highly specific procedural guidance, it defaults to the safest, most common statistical patterns in its training data—a problem Anthropic engineers call "distributional convergence". This is what causes generic "AI slop": boring fonts, muddy design choices, and flat layouts.

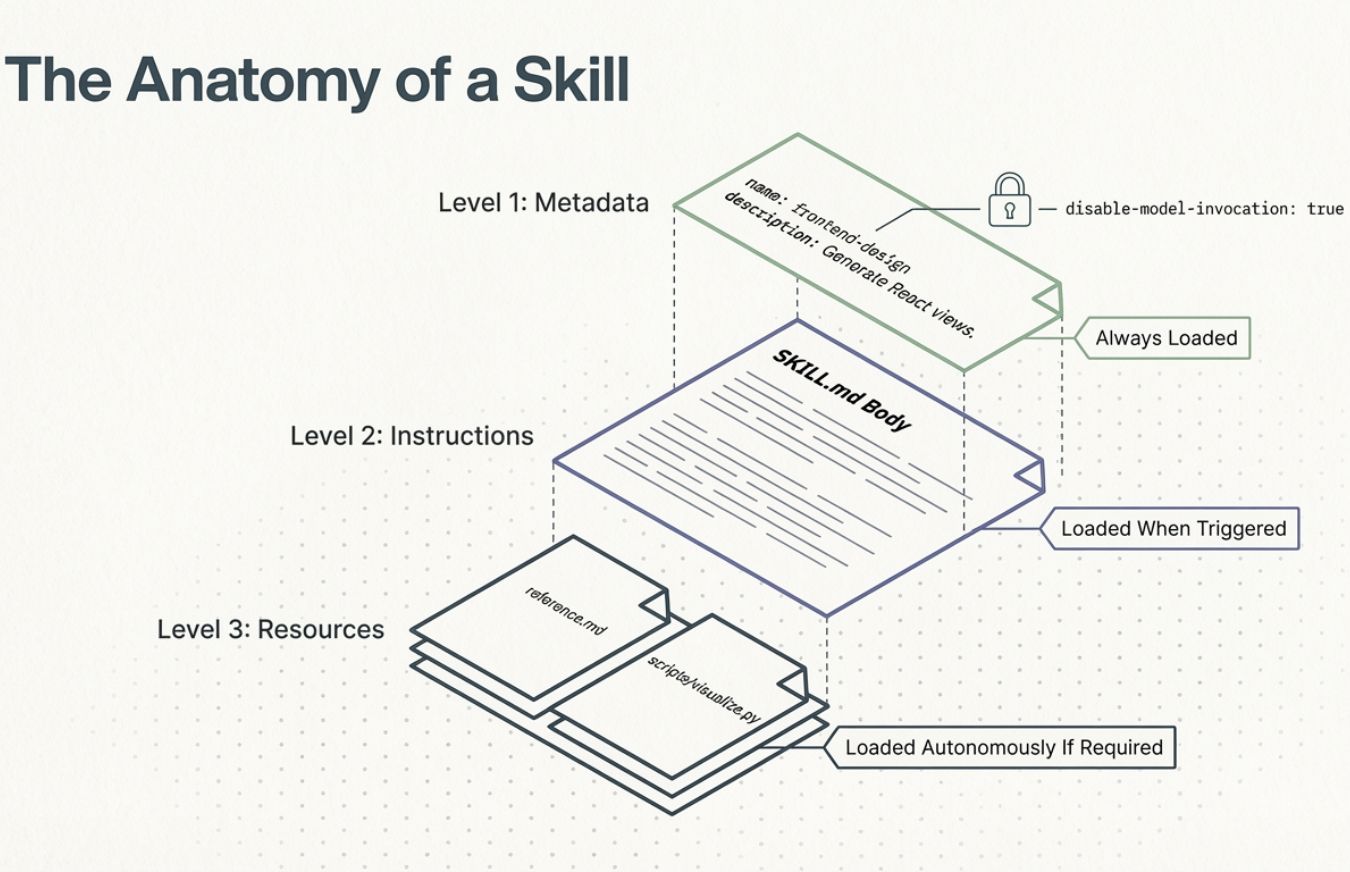

Skills cure this by injecting intense, senior-level expertise on demand. They utilize a brilliant three-level loading system:

Level 1 (Metadata): At startup, Claude only loads the Skill's name and a brief description into its system prompt (usually under 100 tokens). Claude simply knows the tool is in its toolkit.

Level 2 (Instructions): If you ask Claude to perform a task matching that description, it actively triggers the Skill and loads the main SKILL.md instructions into its working memory.

Level 3 (Resources): If the Skill is complex, it can contain bundled scripts or reference files. Claude can choose to navigate and open these deeper files only if the specific task requires it.
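The three levels above can be modeled as a context that grows only when it has to. The sketch below is a toy model under stated assumptions: the `Skill` class, the function names, and the example skill are all hypothetical, and in practice Claude itself decides when a Skill is relevant rather than being told by code like this.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    name: str
    description: str   # Level 1: always in context
    instructions: str  # Level 2: body of SKILL.md, loaded when triggered
    resources: dict[str, str] = field(default_factory=dict)  # Level 3: on demand


def startup_context(skills: list[Skill]) -> list[str]:
    """Level 1: only each skill's name and description are loaded."""
    return [f"{s.name}: {s.description}" for s in skills]


def trigger(context: list[str], skill: Skill) -> list[str]:
    """Level 2: add the skill's main instructions to the context."""
    return context + [skill.instructions]


def open_resource(context: list[str], skill: Skill, filename: str) -> list[str]:
    """Level 3: add one bundled file, and only that file."""
    return context + [skill.resources[filename]]


# Hypothetical skill, used only to show the context growing step by step.
design = Skill(
    name="frontend-design",
    description="Guidance for distinctive UI work.",
    instructions="Full design workflow from SKILL.md...",
    resources={"palettes.md": "Reference color palettes..."},
)

ctx = startup_context([design])                  # 1 entry: metadata only
ctx = trigger(ctx, design)                       # 2 entries: + instructions
ctx = open_resource(ctx, design, "palettes.md")  # 3 entries: + one resource
print(len(ctx))  # 3
```

The point of the design is that a debugging session never pays for the palette file: it stays on disk until a task actually asks for it.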

By using Skills, you don't have to carry a graphic designer's color wheel in your working memory when you are just asking Claude to debug a Python script. The expert is simply waiting down the hall, ready to be called upon.

The Lean AI Philosophy

Building a lightning-fast Claude system means abandoning the instinct to over-explain upfront. By utilizing CLAUDE.md for foundational rules, .claude/rules/ for path-specific triggers, and Agent Skills for on-demand expertise, you build an architecture that naturally protects the AI's attention budget.

You are no longer just typing prompts and hoping for the best; you are architecting a modular, high-signal system that executes tasks far more consistently.